Additionally, due to the smaller size of the available text, smoothing proved to be more important. Unfortunately, this results in a significantly smaller corpus than the giant Google database, but still proved to work for the most part. I found several other large pieces of text and combined them all to create my own bigram probabilities with the help of the NLTK toolbox. Originally, I was planning on using requests to Google's N-gram database however, this failed because Google cut me off (Error 429) from performing too many requests. In order to do this we want to look at the 2 bigrams from either side of the candidate word - P(word | prevWord) and P(nextWord | word). Next we must take the dictionary model into account. also provides a count of all two-letter-grams allowing us to calculate a P(x|w) where $w$ is the actual word and x is the word with noise. This is done using data from which provides the number of each specific edit (for example the number of times ie is typed as ei: ei|ie). For each specific edit, the probability of each edit is calculated. If there were reason to assume that the channel was particularly ``noisy,'' the same algorithm could be used to add all words an additional edit-distance away to the candidate list.Īfter a list of candidate words is generated, each of these words is plugged into the model discussed above. This project used one edit-distance as the maximum because in general, about 80% of all spelling mistakes could be found in that one edit-distance. The first step in the spelling correction process was to produce all words within one edit-distance of each of the words from the input sentence. Using a bigram or trigram will give us context and allow us to more intelligently determine the actual word. We can look at the probability of a single word showing up, or we can look at the probability of some N-gram showing up. The dictionary model can also take a simpler or more complicated form. For example, we would say that a substitution of keys that neighbor each other is more likely than other substitutions. Another option is to develop some heuristic to explain which mistakes are more likely. One option is to use some training set of labeled mistakes in order to determine which mistakes are more likely. However, we can also approach the probability more specifically.

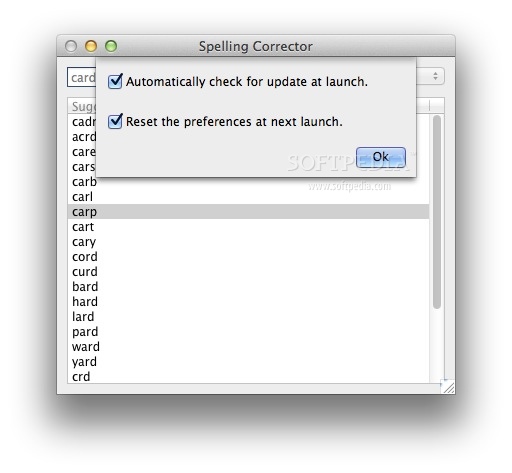

In its simplest form, the channel model will give a higher probability for the lowest number of edits (where an edit is an insertion, deletion, substitution, or transposition). The dictionary model tells us the probability of a specific word (or N-gram) w showing up. The channel model tells us the probability that a specific word could have turned into the received word, x. This can be described as a product between the channel model and the dictionary model. Using Baye’s Rule we can equivalently maximize the product P (x|w)P (w). In order to predict what the intended word is, we want to maximize the probability P (w|x), where w is the actual word and x is the noisy word that the algorithm receives. For example, "fifteen minutes" might be mistyped as "fifteen minuets" - Depending on context, "fifteen minutes" is probably the desired phrase. The goal of this project was to be able to find and correct words that were mistyped, even if the typed word is a word in the dictionary. This program will be able to correct spelling mistakes even if the word is a real dictionary word, but is used in an incorrect context. A spelling corrector based on the noisy channel model.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed